
Best practices for Purview and a federated way of working

Many organizations ask me how I see Microsoft Purview in relation to data mesh or a federated way of working, and ask me to share best practices for establishing a domain-oriented architecture. Let’s explore in this blog post how Purview can support your data governance ambitions.

Introduction

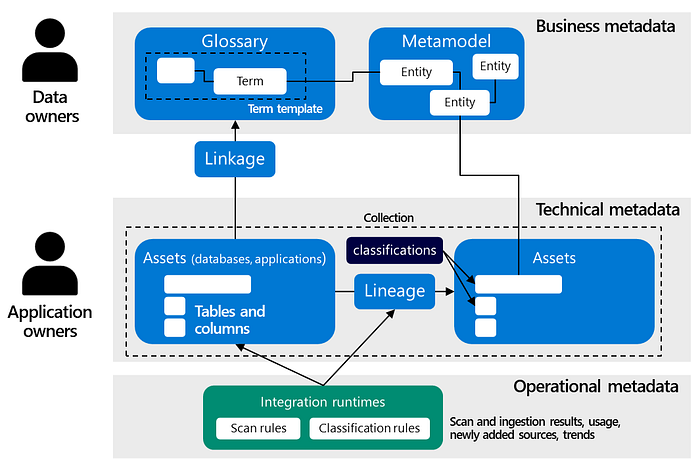

Purview is Microsoft’s unified data governance service that enables organizations to manage their data at scale. It uses terms such as glossary, collections, and assets for organizing metadata. Let’s find out what these mean by starting with the basics. For this, I propose a high-level design that gives an overview of the different metadata areas that are managed within Purview.

Glossary

At the top, you see business metadata, which is about providing (business) context to users. For managing business metadata, Purview provides a Glossary: a vocabulary for business users. At first sight, a glossary is a list of business terms with definitions and relations to other items. The glossary is important for maintaining and organizing information about your data. It captures domain knowledge that is commonly used, communicated, and shared in organizations as they conduct business.

There aren’t any rules for the precise size and representation of glossaries. They can stay abstract or high-level, but they can also be detailed, carefully describing attributes, dependencies, relationships, and definitions. A glossary isn’t limited to a single domain. In fact, it can cover countless applications or multiple databases from many domains. From this point of view, multiple applications can work together to accomplish a specific business need. This means that the relationship between a glossary and data attributes is one-to-many.
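To make the one-to-many relationship concrete, here is a minimal sketch in Python. It is not the Purview API: the class names `GlossaryTerm` and `DataAttribute`, their fields, and the sample domains and databases are all hypothetical, and it assumes the link is modeled per glossary term. It only illustrates how a single business term can point to attributes in several applications and domains.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataAttribute:
    """Hypothetical physical column or field, identified by where it lives."""
    domain: str   # owning domain, e.g. "sales"
    source: str   # application or database name
    name: str     # column or field name


@dataclass
class GlossaryTerm:
    """Hypothetical business term that can be linked to many data attributes."""
    name: str
    definition: str
    attributes: list[DataAttribute] = field(default_factory=list)

    def link(self, attribute: DataAttribute) -> None:
        # Ignore duplicates so each attribute is linked at most once.
        if attribute not in self.attributes:
            self.attributes.append(attribute)

    def domains(self) -> set[str]:
        # All domains in which this term is represented by at least one attribute.
        return {a.domain for a in self.attributes}


# Illustrative data only: one term, attributes in two domains.
customer = GlossaryTerm("Customer", "A party that purchases goods or services.")
customer.link(DataAttribute("sales", "crm_db", "cust_id"))
customer.link(DataAttribute("finance", "billing_db", "customer_number"))
customer.link(DataAttribute("sales", "crm_db", "cust_id"))  # duplicate, ignored

print(len(customer.attributes))
print(sorted(customer.domains()))
```

The term owns the list of links, so the business definition stays in one place while the physical attributes remain spread across domains.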

A glossary can also capture more terms than the concepts representing the application or database itself. It can include concepts that make the context clearer but don’t (yet) play a direct role in the application or database design. It may also include concepts that represent future requirements but haven’t yet found their way into the actual design of the application or database. Thus, a general best practice is to…